<< (AA) introduce materiomusic as a generative framework linking the hierarchical structures of matter with the compositional logic of music. Across proteins, spider webs and flame dynamics, vibrational and architectural principles recur as tonal hierarchies, harmonic progressions, and long-range musical form. >>

<< (They) show how sound functions as a scientific probe, an epistemic inversion where listening becomes a mode of seeing and musical composition becomes a blueprint for matter. >>

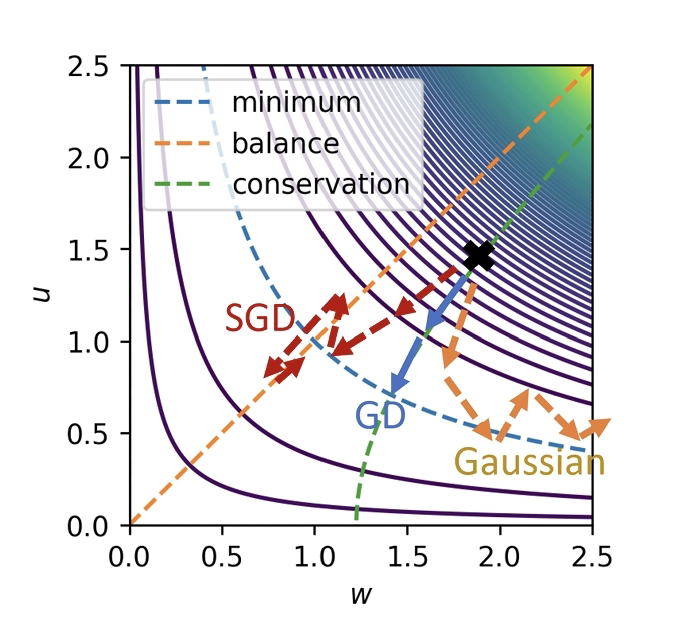

<< Selective imperfection provides the mechanism that restores the balance between coherence and adaptability. >>

<< (They) show how swarm-based AI models compose music exhibiting human-like structural signatures such as small-world connectivity, modular integration, and long-range coherence, suggesting a route beyond interpolation toward invention. (They) show that science and art are generative acts of world-building under constraint, with vibration as a shared grammar organizing structure across scales. >>
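Small-world connectivity is usually diagnosed by pairing a high clustering coefficient with a short average path length. Below is a minimal sketch of how such a signature might be measured on a melody. It assumes (this is my assumption, not necessarily the paper's method) that a piece is reduced to an undirected graph of pitches linked by consecutive transitions. The function names are hypothetical, and a real analysis would also compare the numbers against randomized graphs of the same size.

```python
from collections import defaultdict, deque
from itertools import combinations

def transition_graph(notes):
    """Hypothetical reduction: one node per distinct pitch, an edge between consecutive distinct pitches."""
    graph = defaultdict(set)
    for a, b in zip(notes, notes[1:]):
        if a != b:  # ignore repeated notes (no self-loops)
            graph[a].add(b)
            graph[b].add(a)
    return dict(graph)

def average_clustering(graph):
    """Mean local clustering coefficient; nodes with fewer than 2 neighbours contribute 0."""
    if not graph:
        return 0.0
    total = 0.0
    for node, nbrs in graph.items():
        k = len(nbrs)
        if k < 2:
            continue
        # count edges among the node's neighbours
        links = sum(1 for u, v in combinations(nbrs, 2) if v in graph[u])
        total += 2 * links / (k * (k - 1))
    return total / len(graph)

def average_path_length(graph):
    """Mean shortest-path length over all ordered pairs of reachable distinct nodes, via BFS."""
    total, pairs = 0, 0
    for source in graph:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in graph[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else 0.0

melody = ["C", "D", "E", "C", "E", "G", "C"]
g = transition_graph(melody)
print(average_clustering(g), average_path_length(g))  # 5/6 and 7/6
```

For this toy melody, every pair of pitches except D and G is directly connected, so clustering is high (5/6) while paths stay short (7/6). That combination is the signature the term "small-world" refers to.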

Markus J. Buehler. Selective Imperfection as a Generative Framework for Analysis, Creativity and Discovery. arXiv: 2601.00863v1 [cs.LG]. Dec 30, 2025.

Also: jazz, music, clinamen, chaos, defect, error, mistake, noise, in https://www.inkgmr.net/kwrds.html

Keywords: gst, jazz, music, clinamen, parenklisis, selective imperfection, defect, error, mistake, noise, invention, long-range coherence, chaos.

FonT: apropos of 'selective imperfection', I was once surprised by the structure of a temple gate in Kyoto in which one of the elements is installed upside down.